How We Replaced a Leading Newsletter Platform with an In-House System and Saved ~$150K a Year

Email is one of those systems that feels deceptively simple — until the invoice arrives.

For us, sending daily newsletters to roughly 1.5 million users through a leading customer engagement platform was costing close to $200K per year. The platform worked well, but when we broke it down, the core workflow was straightforward:

Store content → choose an audience → send email → track engagement

We already had:

- A leading analytics / CDP platform for user data, segmentation, and reporting

- A mature React-based product ecosystem

- An internal editorial workflow

So we asked a simple question:

What if we owned the pipeline and only paid for the pieces we couldn’t — or didn’t want to — build ourselves?

A year later, we send the same volume on an in-house platform for ~$50K per year, saving ~$150K annually, while retaining everything that actually matters: segmentation, scheduling, analytics, and a solid editor experience.

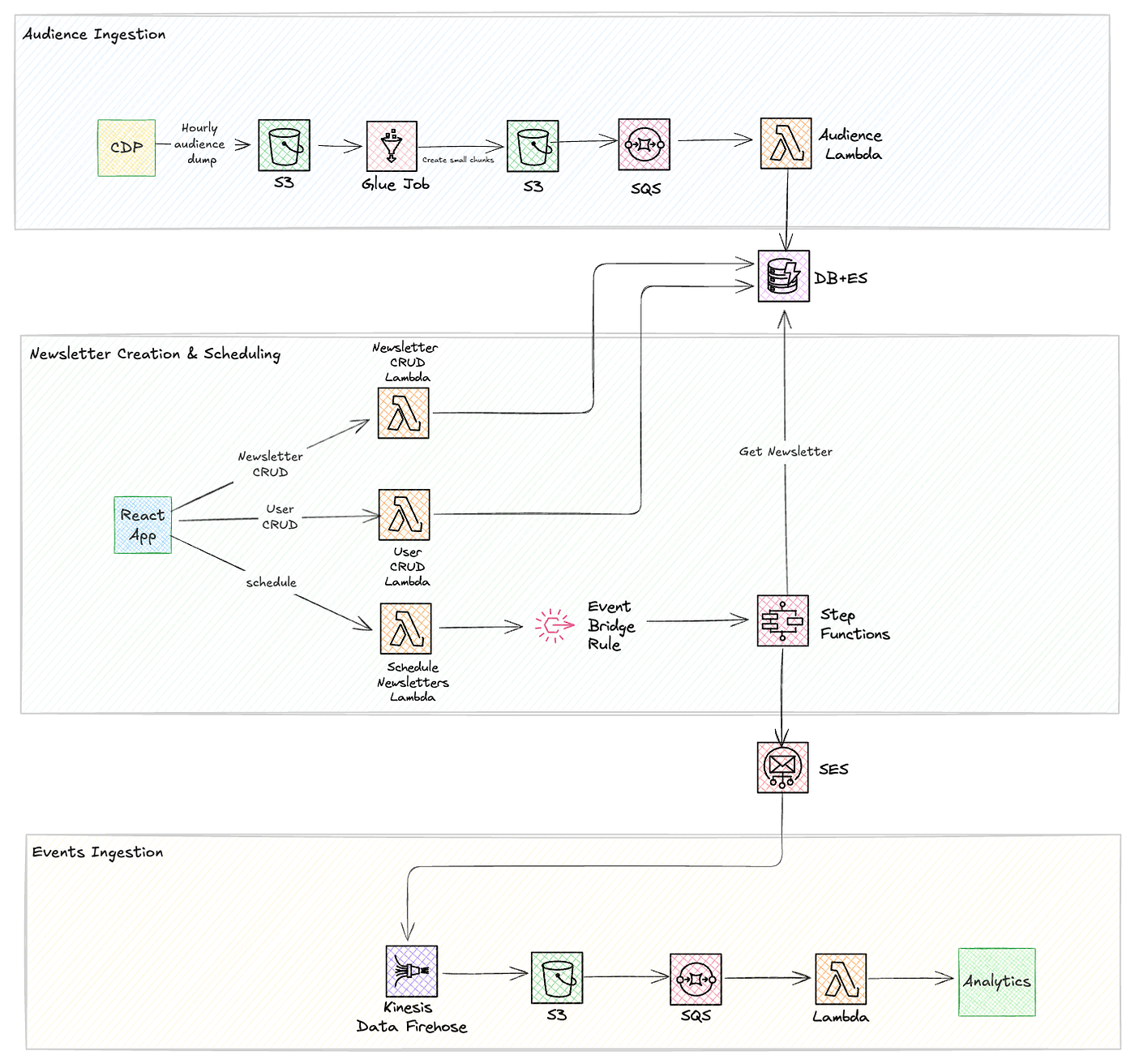

Here is the high-level architecture; I will walk through each flow in turn.

Audience Ingestion Flow

- The CDP exports audience segments on a schedule (e.g. hourly).

- Each export is a CSV (or similar) with user/audience fields (e.g. cdp_id, user_id, email, segment/flags). That file is written into an S3 bucket.

- The source of truth for “who is in which audience” therefore stays in the CDP; ingestion is simply a scheduled dump to S3.

- A Glue job (e.g. PySpark), triggered by a Lambda when new data lands in S3, reads the CSV from that first S3 location, splits it into small, fixed-size chunks (e.g. 5,000 rows each), and writes the chunks to a second S3 location.

- Chunking avoids a few huge files and lets the next stage process in parallel, one chunk per message.

- S3 event notifications send the Object Created event for each chunk written to that bucket (or prefix) to SQS (e.g. AudienceQueue). Each new chunk file becomes one (or more) SQS messages describing which S3 object to process.

- SQS gives you reliable, asynchronous processing: if a processor fails, the message is retried and, after repeated failures, can be moved to a dead-letter queue (DLQ).

- A Lambda function consumes the queue; SQS delivers the messages to it in batches.

- For each message, it reads the chunk CSV from S3 (using the bucket and key in the message) and writes the records in bulk to the audience store (Elasticsearch).
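The consumer Lambda's core logic can be sketched without any AWS SDK calls. This is a minimal TypeScript sketch, not our production code, and it assumes: each SQS message body is a raw S3 event notification (no SNS envelope), chunk files are simple CSVs with a header row and no quoted commas, and records are written through the Elasticsearch `_bulk` API into a hypothetical `audience` index keyed by `user_id`. The `chunk` helper only illustrates the fixed-size splitting that the Glue job performs (the real job is PySpark).

```typescript
// Minimal subset of the S3 event notification shape that this sketch reads.
interface S3EventRecord {
  s3: { bucket: { name: string }; object: { key: string } };
}
interface S3Notification {
  Records?: S3EventRecord[];
}
interface ObjectRef {
  bucket: string;
  key: string;
}
type Row = Record<string, string>;

// Fixed-size chunking, as the Glue job does with e.g. 5,000 rows per chunk.
function chunk<T>(items: T[], size: number): T[][] {
  const out: T[][] = [];
  for (let i = 0; i < items.length; i += size) out.push(items.slice(i, i + size));
  return out;
}

// Extracts bucket/key pairs from an SQS message body holding an S3 notification.
function objectRefsFromSqsBody(body: string): ObjectRef[] {
  const n = JSON.parse(body) as S3Notification;
  // The s3:TestEvent sent when notifications are first configured has no Records.
  return (n.Records || []).map((r) => ({
    bucket: r.s3.bucket.name,
    // Object keys arrive URL-encoded, with spaces encoded as '+'.
    key: decodeURIComponent(r.s3.object.key.replace(/\+/g, ' ')),
  }));
}

// Naive CSV parser: assumes a header row and no quoted or escaped commas.
function parseCsv(csv: string): Row[] {
  const lines = csv.split(/\r?\n/).filter((l) => l.trim().length > 0);
  if (lines.length === 0) return [];
  const headers = lines[0].split(',').map((h) => h.trim());
  return lines.slice(1).map((line) => {
    const cells = line.split(',');
    const row: Row = {};
    headers.forEach((h, i) => {
      row[h] = (cells[i] || '').trim();
    });
    return row;
  });
}

// Elasticsearch _bulk body: one action line plus one document line per record,
// newline-delimited and terminated by a trailing newline.
function toBulkBody(rows: Row[], index: string, idField: string): string {
  if (rows.length === 0) return '';
  const lines: string[] = [];
  for (const r of rows) {
    lines.push(JSON.stringify({ index: { _index: index, _id: r[idField] } }));
    lines.push(JSON.stringify(r));
  }
  return lines.join('\n') + '\n';
}
```

In the handler, each SQS record's body would go through `objectRefsFromSqsBody`; downloading the object and POSTing the result of `toBulkBody` to the cluster's `_bulk` endpoint are left out. Using `user_id` as the document `_id` makes re-processing a duplicated message idempotent.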

Newsletter Creation & Scheduling Flow

- React app (admin UI): Editors create and edit newsletters (templates, content, folders), manage users, and schedule sends, all from the React admin app (built with Material-UI, React Email Editor, etc.).

- Newsletter CRUD Lambda: The React app calls (through API Gateway) a Newsletter Management Lambda to create, read, update, and delete newsletters. It writes metadata to DynamoDB (e.g. newslettersTable) and HTML/content to S3 (e.g. the newsletter archive bucket).

- User CRUD Lambda: User and permission management (e.g. who can create or schedule newsletters) is handled by a User Management Lambda, which also persists to DynamoDB (and, where relevant, the DB + ES data layer).

- Data layer (DB + ES): Newsletter metadata and user data live in DynamoDB; large or searchable content can also involve S3 and Elasticsearch. The audience/segment data used at send time comes from Elasticsearch, which the audience ingestion pipeline keeps up to date.

- Schedule Newsletter Lambda: When an editor clicks “schedule” in the React app, the request hits a Schedule Newsletter Lambda. It stores the schedule (e.g. in DynamoDB) and creates or triggers an EventBridge rule (e.g. a cron expression) so the send runs at the right time.

- Step Functions (orchestration): The state machine runs the send flow. It first invokes sendAudienceOnQueueLambda, which fetches the audience from Elasticsearch and pushes batches of recipients to SQS, then readQueueAndSendNewsletterLambda, which loads the newsletter content from DynamoDB/S3 and sends it via SES.

- Retrieval and delivery: Within Step Functions, newsletter content is loaded from S3/DynamoDB and recipients from Elasticsearch (and/or the DB). Emails are sent through AWS SES; tracking events (opens, clicks, bounces) flow to Firehose/S3 and on into analytics.
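Two small pieces of the scheduling and fan-out logic can be sketched in isolation. The first converts a send time into an EventBridge cron expression that matches a single minute; the second groups recipients into SQS messages and then into SendMessageBatch calls, which accept at most 10 entries each. This is a minimal TypeScript sketch under stated assumptions: the `Recipient` shape, the message body format, and the recipients-per-message value are hypothetical, and the actual SDK calls are omitted.

```typescript
// EventBridge cron fields: minutes hours day-of-month month day-of-week year (UTC).
// Pinning the year and using '?' for day-of-week makes the rule match one minute only.
function toOneTimeCron(at: Date): string {
  return `cron(${at.getUTCMinutes()} ${at.getUTCHours()} ${at.getUTCDate()} ${at.getUTCMonth() + 1} ? ${at.getUTCFullYear()})`;
}

// Hypothetical recipient and batch-entry shapes.
interface Recipient {
  userId: string;
  email: string;
}
interface BatchEntry {
  Id: string; // must be unique within a single SendMessageBatch call
  MessageBody: string;
}

const SQS_MAX_BATCH_ENTRIES = 10; // SendMessageBatch limit

// Packs recipients into messages, then messages into batches of up to 10 entries.
function toSqsBatches(newsletterId: string, recipients: Recipient[], recipientsPerMessage: number): BatchEntry[][] {
  if (recipientsPerMessage < 1) throw new Error('recipientsPerMessage must be >= 1');
  const entries: BatchEntry[] = [];
  for (let i = 0; i < recipients.length; i += recipientsPerMessage) {
    entries.push({
      Id: `m${entries.length}`,
      MessageBody: JSON.stringify({ newsletterId, recipients: recipients.slice(i, i + recipientsPerMessage) }),
    });
  }
  const batches: BatchEntry[][] = [];
  for (let i = 0; i < entries.length; i += SQS_MAX_BATCH_ENTRIES) {
    batches.push(entries.slice(i, i + SQS_MAX_BATCH_ENTRIES));
  }
  return batches;
}
```

With, say, 50 recipients per message (a hypothetical figure), 1.5 million recipients become 30,000 messages sent in 3,000 SendMessageBatch calls. A one-time rule is not deleted after it fires, so the schedule Lambda (or a cleanup step) would need to remove it.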

Event Ingestion Flow

- Event source (SES): Email events (sends, deliveries, opens, clicks, bounces, complaints) are emitted by Amazon SES.

- SES is configured (through a configuration set) with an event destination that forwards these events into the pipeline.

- Streaming ingestion (Kinesis Data Firehose): SES publishes events to Kinesis Data Firehose, which buffers and batches the stream and provides at-least-once delivery into durable storage without you having to manage stream consumers.

- Landing in S3: Firehose delivers the batched events to an S3 bucket (e.g. the newsletter tracking events bucket).

- S3 event notifications route each new object to SQS. This decouples processing: every delivered file becomes a message that a Lambda can process asynchronously, with retries and backpressure.

- Event processing (Lambda): An AWS Lambda (e.g. processNewsletterTrackingEventsLambda) is invoked with these SQS messages. For each one, it reads the referenced S3 object, parses the SES JSON records by eventType (e.g. Delivery, Open, Click, Bounce, Complaint), maps them to your analytics schema, and forwards them to the analytics platform. For hard bounces, it can also send unsubscribe/suppression events.

- Analytics destination: The processed events land in your analytics system, which provides engagement metrics (opens, clicks) and deliverability metrics (bounces, complaints), and lets you build segments and reports on email performance.
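The mapping step in processNewsletterTrackingEventsLambda might look like the following minimal TypeScript sketch. It assumes the Firehose objects hold one SES event-publishing JSON record per line, that each newsletter message has a single recipient, and that the analytics event names, the `AnalyticsEvent` schema, and a `newsletterId` message tag are hypothetical; reading from S3 and calling the analytics platform are omitted.

```typescript
// Subset of the SES event-publishing record fields used here.
interface SesEvent {
  eventType: string;
  mail: { messageId: string; timestamp: string; destination: string[]; tags?: Record<string, string[]> };
  delivery?: { timestamp: string };
  open?: { timestamp: string };
  click?: { timestamp: string; link: string };
  bounce?: { timestamp: string; bounceType: string; bouncedRecipients: { emailAddress: string }[] };
  complaint?: { timestamp: string };
}

// Hypothetical analytics schema.
interface AnalyticsEvent {
  name: string;
  email: string;
  messageId: string;
  timestamp: string;
  newsletterId?: string;
  properties: Record<string, string>;
}

// Hypothetical event names; other SES types (Send, Reject, ...) are skipped.
const EVENT_NAMES: Record<string, string> = {
  Delivery: 'email_delivered',
  Open: 'email_opened',
  Click: 'email_clicked',
  Bounce: 'email_bounced',
  Complaint: 'email_complained',
};

// Splits a Firehose-delivered object into records (one JSON object per line).
function parseFirehoseObject(body: string): SesEvent[] {
  return body
    .split('\n')
    .filter((l) => l.trim().length > 0)
    .map((l) => JSON.parse(l) as SesEvent);
}

function toAnalyticsEvents(e: SesEvent): AnalyticsEvent[] {
  const name = EVENT_NAMES[e.eventType];
  if (!name) return [];
  const detail = e.delivery || e.open || e.click || e.bounce || e.complaint;
  const tagged = e.mail.tags && e.mail.tags['newsletterId'];
  const base = {
    messageId: e.mail.messageId,
    timestamp: detail ? detail.timestamp : e.mail.timestamp, // prefer the event's own time
    newsletterId: tagged ? tagged[0] : undefined,
  };
  // For bounces, only the bounced recipients are affected.
  const emails = e.bounce ? e.bounce.bouncedRecipients.map((r) => r.emailAddress) : e.mail.destination;
  const props: Record<string, string> = e.click ? { link: e.click.link } : {};
  const out: AnalyticsEvent[] = emails.map((email) => ({ name, email, ...base, properties: props }));
  // Hard (Permanent) bounces also emit a suppression/unsubscribe event.
  if (e.bounce && e.bounce.bounceType === 'Permanent') {
    for (const email of emails) {
      out.push({ name: 'email_unsubscribed', email, ...base, properties: { reason: 'hard_bounce' } });
    }
  }
  return out;
}
```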

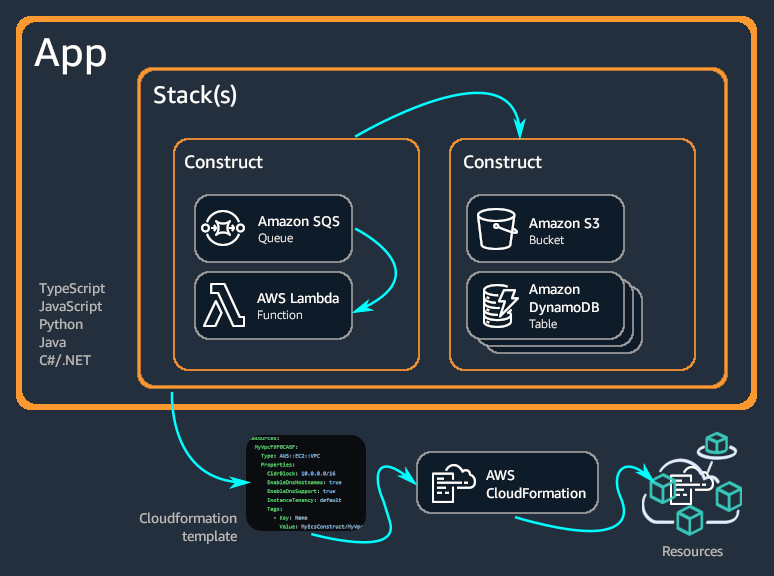

Defining Infrastructure Using AWS CDK

The AWS Cloud Development Kit (AWS CDK) is an open-source software development framework for defining cloud infrastructure in code and provisioning it through AWS CloudFormation.

Benefits of CDK:

- Develop and manage your infrastructure as code (IaC)

- With the AWS CDK, you can use any of the following programming languages to define your cloud infrastructure: TypeScript, JavaScript, Python, Java, C#/.NET, and Go.

- AWS CDK integrates with AWS CloudFormation to deploy and provision your infrastructure on AWS.

- Develop faster by using and sharing reusable components called constructs. Use low-level constructs to define individual AWS CloudFormation resources and their properties.

- Single stack, reusable constructs: One stack composes DynamoDB, S3, SQS, Lambda, Step Functions, EventBridge, Firehose, SES, API Gateway, and CloudFront resources; each piece lives in a construct, so the stack stays readable and reusable.

- Deploy and diff: cdk diff previews changes and cdk deploy applies them; rolling back means reverting the commit and redeploying, so every environment is reproducible from code.

- Why it matters for this project: With this many Lambdas, queues, Firehose streams, Glue jobs, Step Functions, and SES resources, CDK acts as the single source of truth and makes the dev and preprod environments repeatable.

Here is a condensed example of such a stack (Lambda handlers are inline placeholders and resource names are illustrative):

```typescript
import * as cdk from 'aws-cdk-lib';
import { Stack, StackProps, Duration } from 'aws-cdk-lib';
import { Construct } from 'constructs';
import * as dynamodb from 'aws-cdk-lib/aws-dynamodb';
import * as s3 from 'aws-cdk-lib/aws-s3';
import * as sqs from 'aws-cdk-lib/aws-sqs';
import * as lambda from 'aws-cdk-lib/aws-lambda';
import * as apigw from 'aws-cdk-lib/aws-apigateway';
import * as stepfunctions from 'aws-cdk-lib/aws-stepfunctions';
import * as tasks from 'aws-cdk-lib/aws-stepfunctions-tasks';
import * as events from 'aws-cdk-lib/aws-events';
import * as targets from 'aws-cdk-lib/aws-events-targets';
import * as firehose from 'aws-cdk-lib/aws-kinesisfirehose';
import * as iam from 'aws-cdk-lib/aws-iam';
import * as cloudfront from 'aws-cdk-lib/aws-cloudfront';
import * as origins from 'aws-cdk-lib/aws-cloudfront-origins';
import * as ses from 'aws-cdk-lib/aws-ses';

export class NewsletterPlatformStack extends Stack {
  constructor(scope: Construct, id: string, props?: StackProps) {
    super(scope, id, props);

    /* ======================
       DATA LAYER
       ====================== */

    // Newsletter metadata, keyed by newsletterId.
    const newslettersTable = new dynamodb.Table(this, 'NewslettersTable', {
      partitionKey: { name: 'newsletterId', type: dynamodb.AttributeType.STRING },
      billingMode: dynamodb.BillingMode.PAY_PER_REQUEST,
    });

    // Newsletter HTML/content archive.
    const contentBucket = new s3.Bucket(this, 'NewsletterContentBucket', {
      versioned: true,
      encryption: s3.BucketEncryption.S3_MANAGED,
    });

    // Separate landing bucket for SES tracking events delivered by Firehose.
    const trackingEventsBucket = new s3.Bucket(this, 'TrackingEventsBucket', {
      encryption: s3.BucketEncryption.S3_MANAGED,
    });

    /* ======================
       QUEUE
       ====================== */

    // Messages that keep failing are moved to a dead-letter queue.
    const audienceDlq = new sqs.Queue(this, 'AudienceDlq');
    const audienceQueue = new sqs.Queue(this, 'AudienceQueue', {
      visibilityTimeout: Duration.minutes(5),
      deadLetterQueue: { queue: audienceDlq, maxReceiveCount: 3 },
    });

    /* ======================
       LAMBDAS (inline placeholder handlers)
       ====================== */

    const newsletterCrudLambda = new lambda.Function(this, 'NewsletterCrudLambda', {
      runtime: lambda.Runtime.NODEJS_20_X,
      handler: 'index.handler',
      code: lambda.Code.fromInline('exports.handler = async () => {};'),
      environment: {
        TABLE_NAME: newslettersTable.tableName,
        BUCKET_NAME: contentBucket.bucketName,
      },
    });
    newslettersTable.grantReadWriteData(newsletterCrudLambda);
    contentBucket.grantReadWrite(newsletterCrudLambda);

    const sendAudienceLambda = new lambda.Function(this, 'SendAudienceLambda', {
      runtime: lambda.Runtime.NODEJS_20_X,
      handler: 'index.handler',
      code: lambda.Code.fromInline('exports.handler = async () => {};'),
      environment: {
        QUEUE_URL: audienceQueue.queueUrl,
      },
    });
    audienceQueue.grantSendMessages(sendAudienceLambda);

    const sendEmailLambda = new lambda.Function(this, 'SendEmailLambda', {
      runtime: lambda.Runtime.NODEJS_20_X,
      handler: 'index.handler',
      code: lambda.Code.fromInline('exports.handler = async () => {};'),
      environment: {
        BUCKET_NAME: contentBucket.bucketName,
      },
    });
    contentBucket.grantRead(sendEmailLambda);
    audienceQueue.grantConsumeMessages(sendEmailLambda);
    // The sender must be allowed to call SES (scope to the identity ARN in production).
    sendEmailLambda.addToRolePolicy(new iam.PolicyStatement({
      actions: ['ses:SendEmail', 'ses:SendRawEmail'],
      resources: ['*'],
    }));

    /* ======================
       STEP FUNCTIONS
       ====================== */

    // outputPath passes only each Lambda's return value on to the next state.
    const enqueueAudience = new tasks.LambdaInvoke(this, 'EnqueueAudience', {
      lambdaFunction: sendAudienceLambda,
      outputPath: '$.Payload',
    });
    const sendEmails = new tasks.LambdaInvoke(this, 'SendEmails', {
      lambdaFunction: sendEmailLambda,
      outputPath: '$.Payload',
    });
    const stateMachine = new stepfunctions.StateMachine(this, 'NewsletterStateMachine', {
      definitionBody: stepfunctions.DefinitionBody.fromChainable(enqueueAudience.next(sendEmails)),
      timeout: Duration.minutes(30),
    });

    /* ======================
       EVENTBRIDGE (SCHEDULING)
       ====================== */

    // A fixed hourly rule for illustration; in the real flow the Schedule
    // Newsletter Lambda creates or triggers rules at runtime.
    const scheduleRule = new events.Rule(this, 'NewsletterScheduleRule', {
      schedule: events.Schedule.rate(Duration.hours(1)),
    });
    scheduleRule.addTarget(new targets.SfnStateMachine(stateMachine));

    /* ======================
       API GATEWAY
       ====================== */

    const api = new apigw.RestApi(this, 'NewsletterApi');
    const newsletters = api.root.addResource('newsletters');
    newsletters.addMethod('POST', new apigw.LambdaIntegration(newsletterCrudLambda));
    newsletters.addMethod('GET', new apigw.LambdaIntegration(newsletterCrudLambda));

    /* ======================
       SES (EMAIL)
       ====================== */

    // Domain identity; its DKIM (and ideally SPF/DMARC) records must be published in DNS.
    // The configuration set and its Firehose event destination are not shown here.
    new ses.EmailIdentity(this, 'SesIdentity', {
      identity: ses.Identity.domain('example.com'),
    });

    /* ======================
       FIREHOSE (EVENT INGESTION)
       ====================== */

    const firehoseRole = new iam.Role(this, 'FirehoseRole', {
      assumedBy: new iam.ServicePrincipal('firehose.amazonaws.com'),
    });
    trackingEventsBucket.grantReadWrite(firehoseRole);

    const sesEventsFirehose = new firehose.CfnDeliveryStream(this, 'SesEventsFirehose', {
      deliveryStreamType: 'DirectPut',
      s3DestinationConfiguration: {
        bucketArn: trackingEventsBucket.bucketArn,
        roleArn: firehoseRole.roleArn,
      },
    });
    // Create the stream only after the role and its S3 policy exist.
    sesEventsFirehose.node.addDependency(firehoseRole);

    /* ======================
       CLOUDFRONT (ADMIN UI)
       ====================== */

    // Private bucket served only through CloudFront (origin access control).
    // S3 website hosting is not needed: CloudFront serves index.html via defaultRootObject.
    const adminUiBucket = new s3.Bucket(this, 'AdminUiBucket', {
      blockPublicAccess: s3.BlockPublicAccess.BLOCK_ALL,
    });
    const distribution = new cloudfront.Distribution(this, 'AdminUiDistribution', {
      defaultRootObject: 'index.html',
      defaultBehavior: {
        // S3BucketOrigin requires aws-cdk-lib 2.156 or later.
        origin: origins.S3BucketOrigin.withOriginAccessControl(adminUiBucket),
      },
    });

    /* ======================
       OUTPUTS
       ====================== */

    new cdk.CfnOutput(this, 'ApiUrl', { value: api.url });
    new cdk.CfnOutput(this, 'CloudFrontUrl', {
      value: `https://${distribution.domainName}`,
    });
  }
}
```

CDK gives you full control over AWS resources, policies, and permissions without touching the AWS console. Exciting, right?

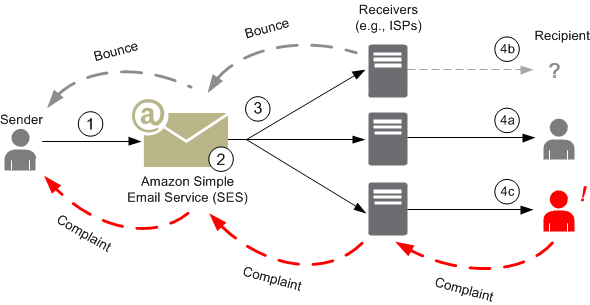

Let's now talk about Amazon Simple Email Service (AWS SES).

It is a scalable, cost-effective, and reliable cloud email service provided by Amazon Web Services. It is designed to help businesses send transactional emails, marketing emails, and bulk newsletters while maintaining high deliverability and compliance.

High Deliverability

- Built-in reputation management and feedback loops

- Integration with ISPs to reduce spam filtering

- Supports DKIM, SPF, and DMARC for domain authentication
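For a domain identity such as `ses.Identity.domain('example.com')` in the CDK stack, these mechanisms come down to DNS records. The TypeScript sketch below lists the usual set: three Easy DKIM CNAMEs, an MX and SPF record for a custom MAIL FROM subdomain (needed for SPF to align with your domain under DMARC), and a DMARC policy. It is a sketch under assumptions: the DKIM tokens come from SES, the MAIL FROM subdomain, report mailbox, and `p=none` policy are placeholders, and the exact values should be copied from the SES console for your identity and region.

```typescript
interface DnsRecord {
  name: string;
  type: 'CNAME' | 'MX' | 'TXT';
  value: string;
}

// Builds the DNS records typically published for an SES domain identity.
// dkimTokens are the Easy DKIM tokens SES generates for the domain.
function sesAuthRecords(
  domain: string,
  region: string,
  dkimTokens: string[],
  mailFromSubdomain: string, // hypothetical, e.g. "mail" -> mail.example.com
  dmarcReportTo: string, // hypothetical aggregate-report mailbox
): DnsRecord[] {
  // Easy DKIM: <token>._domainkey.<domain> CNAME <token>.dkim.amazonses.com
  const records = dkimTokens.map(
    (t): DnsRecord => ({ name: `${t}._domainkey.${domain}`, type: 'CNAME', value: `${t}.dkim.amazonses.com` }),
  );
  const mailFrom = `${mailFromSubdomain}.${domain}`;
  // Custom MAIL FROM: MX for bounce feedback plus an SPF record authorizing SES.
  records.push({ name: mailFrom, type: 'MX', value: `10 feedback-smtp.${region}.amazonses.com` });
  records.push({ name: mailFrom, type: 'TXT', value: 'v=spf1 include:amazonses.com ~all' });
  // DMARC: start in monitoring mode (p=none) and tighten once reports look clean.
  records.push({ name: `_dmarc.${domain}`, type: 'TXT', value: `v=DMARC1; p=none; rua=mailto:${dmarcReportTo}` });
  return records;
}
```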

Managed vs Dedicated IPs

- Managed IPs: AWS handles IP reputation automatically (ideal for startups and new platforms)

- Dedicated IPs: Full control over sending reputation for high-volume senders

- IP warm-up is required when sending bulk email for the first time

We used managed IPs because they were hassle-free.
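Warm-up is essentially a ramp of daily send caps. The TypeScript sketch below generates one by growing the cap geometrically until it reaches the target volume. The starting volume and growth factor are hypothetical inputs, not an AWS-prescribed schedule; a real plan should follow SES guidance and react to your own bounce and complaint rates.

```typescript
// Hypothetical warm-up ramp: start at startPerDay, multiply by growth each day,
// and finish with a day at the full targetPerDay.
function warmUpPlan(startPerDay: number, growth: number, targetPerDay: number): number[] {
  if (startPerDay <= 0 || growth <= 1) throw new Error('need startPerDay > 0 and growth > 1');
  const plan: number[] = [];
  let cap = startPerDay;
  while (cap < targetPerDay) {
    plan.push(Math.round(cap));
    cap *= growth;
  }
  plan.push(targetPerDay);
  return plan;
}
```

For example, starting at a hypothetical 50,000 sends and doubling daily, `warmUpPlan(50000, 2, 1500000)` returns caps of 50,000, 100,000, 200,000, 400,000, and 800,000, reaching the full 1.5 million on day six.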

Scalability

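- Can handle millions of emails per day, within your account's sending quota (emails per 24 hours) and maximum send rate (emails per second), both of which can be raised on request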

- Automatic scaling without manual infrastructure planning

- Works seamlessly with event-driven AWS services
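Because SES enforces a per-account maximum send rate alongside the daily quota, the sending Lambda needs pacing. Below is a minimal token-bucket sketch in TypeScript; the class and function names and the rate values are hypothetical, and the clock is injectable so the logic can be tested without waiting.

```typescript
// Token bucket: refills at ratePerSec tokens per second and holds at most one
// second's worth, so sustained throughput stays at or below ratePerSec.
class SendRateLimiter {
  private readonly ratePerSec: number;
  private readonly now: () => number;
  private tokens: number;
  private last: number;

  constructor(ratePerSec: number, now: () => number = () => Date.now()) {
    if (ratePerSec < 1) throw new Error('ratePerSec must be at least 1');
    this.ratePerSec = ratePerSec;
    this.now = now;
    this.tokens = ratePerSec; // start with a full bucket
    this.last = now();
  }

  // Consumes one token and returns true if a send may happen right now.
  tryAcquire(): boolean {
    const t = this.now();
    const refill = ((t - this.last) / 1000) * this.ratePerSec;
    this.tokens = Math.min(this.ratePerSec, this.tokens + refill);
    this.last = t;
    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// Lower bound on wall-clock seconds needed to send `total` emails at `ratePerSec`.
function minSendSeconds(total: number, ratePerSec: number): number {
  return Math.ceil(total / ratePerSec);
}
```

At a hypothetical 100 emails per second, `minSendSeconds(1500000, 100)` is 15,000 seconds, roughly 4.2 hours. Since each concurrent Lambda invocation would hold its own limiter, the per-instance rate would need to be the account rate divided by the number of concurrent senders (for example, capped with reserved concurrency).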

Event Tracking & Analytics

SES can publish events such as:

- Deliveries

- Opens

- Clicks

- Bounces

- Complaints

- Rejections

These events integrate cleanly with:

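- Amazon Kinesis Data Firehose (a direct SES event destination)

- Amazon S3 (via Firehose delivery)

- Amazon SNS (a direct event destination) and, through it, Amazon SQS

- AWS Lambda (e.g. triggered from SNS, or from SQS as in our pipeline)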

This makes SES ideal for newsletter analytics and engagement tracking.
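SES publishes these events only for messages sent with a configuration set that has an event destination, and message tags are carried into each published event under `mail.tags`. A minimal TypeScript sketch of a send request built that way follows. It mirrors a subset of the SES v2 SendEmail input as a plain object so it has no SDK dependency; the configuration set name and the `newsletterId` tag are hypothetical.

```typescript
// Subset of the SES v2 SendEmail request shape (as accepted by SendEmailCommand
// in @aws-sdk/client-sesv2), built as a plain object.
interface SendEmailInput {
  FromEmailAddress: string;
  Destination: { ToAddresses: string[] };
  Content: {
    Simple: {
      Subject: { Data: string; Charset: string };
      Body: { Html: { Data: string; Charset: string } };
    };
  };
  ConfigurationSetName: string;
  EmailTags: { Name: string; Value: string }[];
}

// Conservatively keep only ASCII letters, digits, '_' and '-' in tag values,
// and stay under 256 characters.
function toTagValue(s: string): string {
  return s.replace(/[^A-Za-z0-9_-]/g, '_').slice(0, 255);
}

function buildNewsletterEmail(opts: {
  from: string;
  to: string;
  subject: string;
  html: string;
  newsletterId: string;
  configurationSet: string; // hypothetical, e.g. "newsletter-events"
}): SendEmailInput {
  return {
    FromEmailAddress: opts.from,
    Destination: { ToAddresses: [opts.to] }, // one recipient per message
    Content: {
      Simple: {
        Subject: { Data: opts.subject, Charset: 'UTF-8' },
        Body: { Html: { Data: opts.html, Charset: 'UTF-8' } },
      },
    },
    ConfigurationSetName: opts.configurationSet,
    EmailTags: [{ Name: 'newsletterId', Value: toTagValue(opts.newsletterId) }],
  };
}
```

The resulting object could be passed to `new SendEmailCommand(...)` from `@aws-sdk/client-sesv2`; the tag then appears in each published event as `mail.tags.newsletterId`, which lets the event processor attribute opens and clicks to a specific newsletter.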

Learnings

- CDK-based deployments integrated with GitHub Actions significantly reduced deployment time and improved release consistency.

- Amazon Kinesis Firehose proved effective for ingesting high-volume event data, while the S3 + SQS + Lambda pipeline handled downstream processing reliably and at scale.

- AWS infrastructure can be fully managed as code using CDK, enabling repeatable, version-controlled, and maintainable cloud environments.

- AWS SES managed IPs simplified email deliverability by offloading IP reputation and management for the newsletter domain.

- Initial IP warm-up is mandatory when sending bulk newsletters for the first time to establish sender reputation and ensure high inbox placement.

Final Thoughts

We didn’t out-engineer a marketing platform — we re-scoped the problem.

By combining:

- A cloud email service (AWS SES)

- An existing analytics/CDP platform

- Event-driven, serverless infrastructure

- A small React admin app

We replaced a large portion of a third-party newsletter platform with something cheaper, simpler, and better aligned with how our teams work.

If you’re spending heavily on email tooling and already have strong analytics and engineering foundations, a focused in-house newsletter system may be far more achievable — and cost-effective — than it first appears.

I've tried to capture the high-level details in this article. Let me know if you'd like more detail on any part of the system.
